These days, software developers have a wide range of database technologies to choose from when building modern applications. Requirements such as horizontal scalability, high availability, and a flexible data model drive the need for databases designed to solve a different set of problems than traditional relational databases. Azure Cosmos DB is Microsoft's globally distributed, multi-model, fully managed NoSQL database.

One of the interesting features of Cosmos DB is its easily configurable global distribution, where data is transparently replicated across the regions you configure. You can also choose from five data consistency levels. Another distinctive aspect of the service is its multi-model API approach: you select your preferred API and data model when creating your Cosmos DB account. The options include the SQL API, MongoDB, Cassandra, Gremlin, and the Azure Table key-value API.

Configuring a Cosmos DB Database

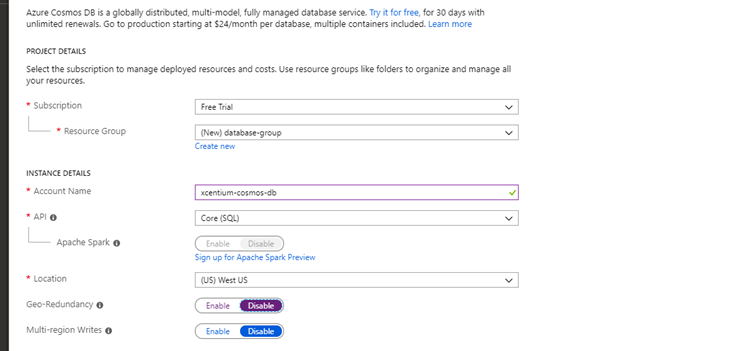

It's easy to get started with Cosmos DB by signing up for a 30-day trial at https://azure.microsoft.com/en-us/try/cosmosdb/. When creating a new account, you must specify a resource group name, a unique account name, and an Azure region, and select the API model you want to work with.

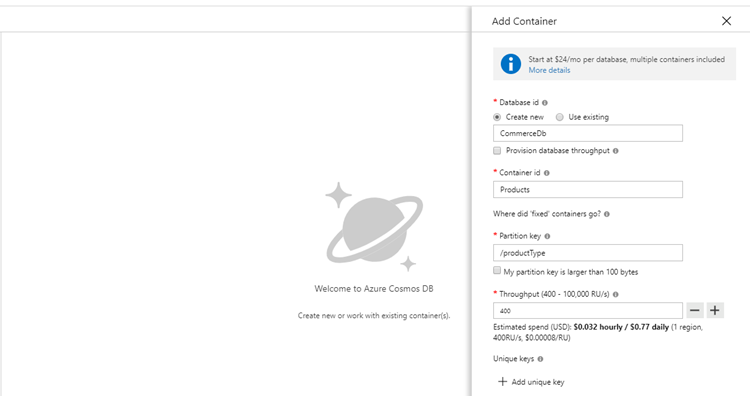

The next step is to create a database and add a new container. A container is equivalent to a document collection in a typical NoSQL database or a table in a relational database. You do this by clicking the Add Container button on the Overview screen or by going to Data Explorer. In this example, I will create a simple database that contains a list of products for a fictional commerce application.

One important consideration when modeling your containers is that you are not building a relational data model. Cosmos DB does not support cross-container joins, so the best practice is to build a denormalized schema with nested documents. If you want to learn more about data modeling in Azure Cosmos DB, please refer to this article: https://docs.microsoft.com/en-us/azure/cosmos-db/modeling-data. You must also specify a partition key when you create a container. A good partition key has a wide range of values and distributes the data evenly across multiple logical partitions. Examples might include Category, Product Type, or Physical Location attributes.
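One rough way to sanity-check a candidate partition key before creating the container is to count how many sample items fall under each of its values, since each distinct value maps to its own logical partition. The sketch below does this in plain C# with LINQ. The `PartitionKeyCheck` class, the `CountByKey` helper, and the sample products are hypothetical and are not part of the Cosmos DB SDK.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical helper for illustration; not part of the Cosmos DB SDK.
public static class PartitionKeyCheck
{
    // Groups items by a candidate partition key and counts the items per value.
    public static Dictionary<string, int> CountByKey<T>(
        IEnumerable<T> items, Func<T, string> keySelector)
    {
        return items
            .GroupBy(keySelector)
            .ToDictionary(group => group.Key, group => group.Count());
    }

    public static void Main()
    {
        // Made-up sample data: a product name paired with its product type.
        var products = new[]
        {
            Tuple.Create("Road Bike", "Bikes"),
            Tuple.Create("Mountain Bike", "Bikes"),
            Tuple.Create("Helmet", "Accessories"),
            Tuple.Create("Water Bottle", "Accessories"),
            Tuple.Create("Jersey", "Clothing")
        };

        // Use the product type (Item2) as the candidate partition key.
        var counts = CountByKey(products, p => p.Item2);

        foreach (var pair in counts)
        {
            Console.WriteLine($"{pair.Key}: {pair.Value} item(s)");
        }
    }
}
```

If the counts are heavily skewed toward one or two values, that key is likely to concentrate storage and requests in a few logical partitions, and a different attribute may be a better choice.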

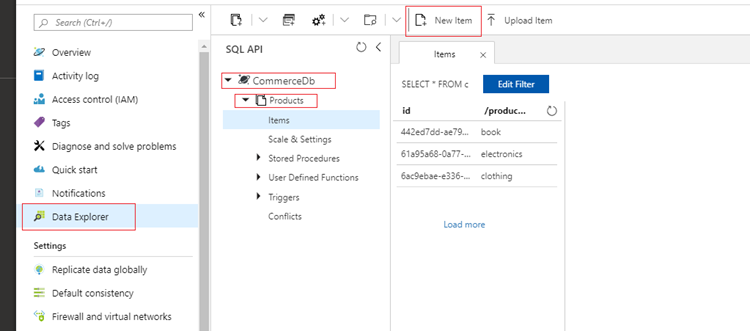

After the database and container are created, add a couple of test items using Data Explorer. You do this by expanding your database and then the container name under Data Explorer, and then clicking the New Item button.

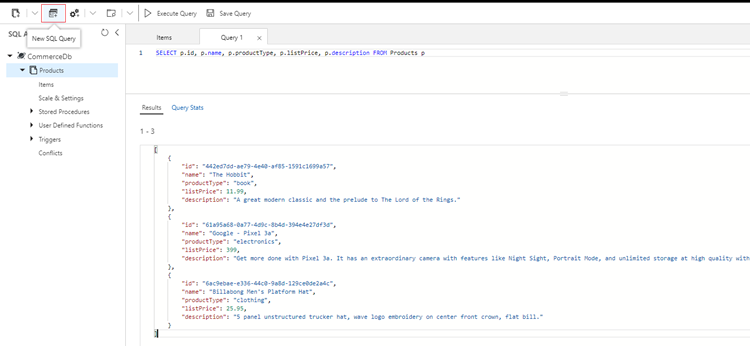

After adding a couple of products, we can test our SQL skills by writing a simple query in the New SQL Query window. You will notice that you need to alias the container to properly identify your attributes and that the attribute names are case-sensitive.

Cosmos DB .NET SDK

Now we are ready to create a Visual Studio solution to interact with our newly created Cosmos DB database. Here I will be using .NET Framework 4.7.2 as the target framework. The Azure Cosmos DB .NET SDK must also be installed before we continue. You can use the NuGet Package Manager Console for this:

PM> Install-Package Microsoft.Azure.DocumentDB

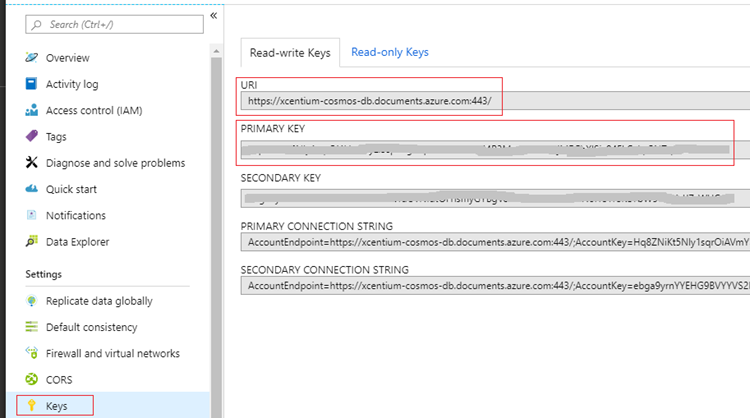

Another requirement is to retrieve the database endpoint URI and Primary Key values in order to establish the connection. You can find them under Settings > Keys > Read-write Keys in the Azure portal. You can add those values to a config file in your project so they can be referenced later in the code.
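As a sketch, the two values could be stored in the appSettings section of App.config (or Web.config for a web project). The key names endpoint and authKey match the ones read by the repository code later in this article. The values shown are placeholders, not real credentials, and a real key is best kept out of source control.

```xml
<?xml version="1.0" encoding="utf-8"?>
<configuration>
  <appSettings>
    <!-- Placeholder values: copy the URI and Primary Key from the Keys page of your account. -->
    <add key="endpoint" value="https://your-account-name.documents.azure.com:443/" />
    <add key="authKey" value="your-primary-key" />
  </appSettings>
</configuration>
```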

Next, to demonstrate CRUD operations, I will create a simple repository class that encapsulates communication with the Cosmos DB container. We need to create an instance of the DocumentClient class, which requires the database endpoint URI and the auth key (primary key) as dependencies. Also, all communication with the service should be asynchronous and non-blocking. Please see the complete code below.

    using System;
    using System.Collections.Generic;
    using System.Configuration;
    using System.Linq;
    using System.Linq.Expressions;
    using System.Threading.Tasks;
    using Microsoft.Azure.Documents;
    using Microsoft.Azure.Documents.Client;
    using Microsoft.Azure.Documents.Linq;

    public class ProductRepository
    {
        private DocumentClient _client;
        private bool _initialized;
        private readonly string DatabaseId = "CommerceDb";
        private readonly string ContainerId = "Products";

        // Creates the client on first use and verifies that the database and container exist.
        public async Task InitializeAsync()
        {
            if (!_initialized)
            {
                var endpoint = ConfigurationManager.AppSettings["endpoint"];
                var authKey = ConfigurationManager.AppSettings["authKey"];

                _client = new DocumentClient(new Uri(endpoint), authKey);

                await EnsureDatabaseExists();
                await EnsureContainerExists();

                _initialized = true;
            }
        }

        // Returns all products that match the filter, reading every page of results.
        public async Task<IEnumerable<Product>> GetProductsUsingFilterAsync(
            Expression<Func<Product, bool>> filter)
        {
            await InitializeAsync();

            var products = new List<Product>();

            var query = _client.CreateDocumentQuery<Product>(
                    UriFactory.CreateDocumentCollectionUri(DatabaseId, ContainerId),
                    new FeedOptions() { EnableCrossPartitionQuery = true })
                .Where(filter)
                .AsDocumentQuery();

            while (query.HasMoreResults)
            {
                products.AddRange(await query.ExecuteNextAsync<Product>());
            }

            return products;
        }

        public async Task<Document> CreateProductAsync(Product product)
        {
            await InitializeAsync();

            return await _client.CreateDocumentAsync(
                UriFactory.CreateDocumentCollectionUri(DatabaseId, ContainerId),
                product);
        }

        public async Task<Document> UpdateProductAsync(string id, Product product)
        {
            await InitializeAsync();

            return await _client.ReplaceDocumentAsync(
                UriFactory.CreateDocumentUri(DatabaseId, ContainerId, id),
                product);
        }

        // Deleting an item requires its partition key value (the product type here).
        public async Task DeleteProductAsync(string id, string productType)
        {
            await InitializeAsync();

            await _client.DeleteDocumentAsync(
                UriFactory.CreateDocumentUri(DatabaseId, ContainerId, id),
                new RequestOptions() { PartitionKey = new PartitionKey(productType) });
        }

        // Throws DocumentClientException (NotFound) if the database is missing.
        private async Task EnsureDatabaseExists()
        {
            await _client.ReadDatabaseAsync(
                UriFactory.CreateDatabaseUri(DatabaseId));
        }

        // Throws DocumentClientException (NotFound) if the container is missing.
        private async Task EnsureContainerExists()
        {
            await _client.ReadDocumentCollectionAsync(
                UriFactory.CreateDocumentCollectionUri(DatabaseId, ContainerId));
        }
    }

The EnsureDatabaseExists and EnsureContainerExists methods verify that the database and container exist; if either is missing, the call throws a DocumentClientException with a status code of NotFound. The GetProductsUsingFilterAsync method accepts a filter in the form of a lambda expression, since it is rarely practical to return all the data when working with a large, distributed dataset. Finally, note that the DeleteDocumentAsync operation requires a partition key. In this example, that is the product type value of the item being deleted.

We also need a model class to define our Product item. I have added the JsonProperty attribute so that the property names are serialized in camelCase when items are added to the container.

    using Newtonsoft.Json;

    public class Product
    {
        [JsonProperty(PropertyName = "id")]
        public string Id { get; set; }

        [JsonProperty(PropertyName = "productType")]
        public string ProductType { get; set; }

        [JsonProperty(PropertyName = "name")]
        public string Name { get; set; }

        [JsonProperty(PropertyName = "description")]
        public string Description { get; set; }

        [JsonProperty(PropertyName = "listPrice")]
        public double ListPrice { get; set; }

        [JsonProperty(PropertyName = "availableQuantity")]
        public int AvailableQuantity { get; set; }
    }
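To tie the two classes together, here is a minimal sketch of how a caller might use the repository. The `ProductRepositoryDemo` class, the sample product values, and the decision to simply log a NotFound error are assumptions made for illustration; the repository and model are the ones defined above. It needs a reachable Cosmos DB account and valid endpoint and authKey settings to run.

```csharp
using System;
using System.Net;
using System.Threading.Tasks;
using Microsoft.Azure.Documents;

// Hypothetical console entry point that exercises ProductRepository.
public static class ProductRepositoryDemo
{
    public static void Main()
    {
        // Block on the async workflow so this also works without an async Main.
        RunAsync().GetAwaiter().GetResult();
    }

    private static async Task RunAsync()
    {
        var repository = new ProductRepository();

        // Made-up sample item; ProductType supplies the partition key value.
        var product = new Product
        {
            Id = Guid.NewGuid().ToString(),
            ProductType = "Bikes",
            Name = "Road Bike",
            Description = "Lightweight aluminum frame",
            ListPrice = 799.99,
            AvailableQuantity = 5
        };

        try
        {
            await repository.CreateProductAsync(product);

            // The lambda is passed to the SDK's LINQ provider as the query filter.
            var bikes = await repository.GetProductsUsingFilterAsync(
                p => p.ProductType == "Bikes");
            foreach (var bike in bikes)
            {
                Console.WriteLine($"{bike.Name}: {bike.ListPrice}");
            }

            product.AvailableQuantity = 4;
            await repository.UpdateProductAsync(product.Id, product);

            // Deleting needs the partition key value as well as the id.
            await repository.DeleteProductAsync(product.Id, product.ProductType);
        }
        catch (DocumentClientException ex)
            when (ex.StatusCode == HttpStatusCode.NotFound)
        {
            // Raised when the database, container, or item does not exist.
            Console.WriteLine("Resource not found: " + ex.Message);
        }
    }
}
```

Catching only the NotFound case keeps other failures, such as throttling or authentication errors, visible to the caller rather than silently swallowing them.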

Conclusion

I hope this example gives you a good idea of how you might build the back end of your .NET application with Azure Cosmos DB. While not every scenario requires a highly scalable NoSQL database, Cosmos DB is a good option when you need to distribute your data across multiple geographical regions or elastically scale your database as your throughput and storage needs grow.